Whitepapers

ATSC 3.0 brings a lot of promise to broadcasters. This includes everything from incredible pictures and sound to second screen and targeted advertising, which, for the first time, closes the loop for over-the-air broadcasters. Transitioning to ATSC 3.0 will not be a trivial feat, and it is unlikely that anyone will “turn on” every feature it has to offer on day one. How much time will it take to verify the 20 new standards against the 20 vendors all trying to play well together? What is the safest path for moving the typical 1 HD and 3 SD in MPEG-2, SMPTE 310/ASI, and 8-VSB to 4 HD in H.265, S2022-1/IP, and COFDM, much less for supporting all the additional use cases offered? This paper will peel back the many layers of ATSC 3.0 and provide a method of real-time and historical verification, which, like ATSC 3.0 itself, closes the loop for a much faster and safer transition.

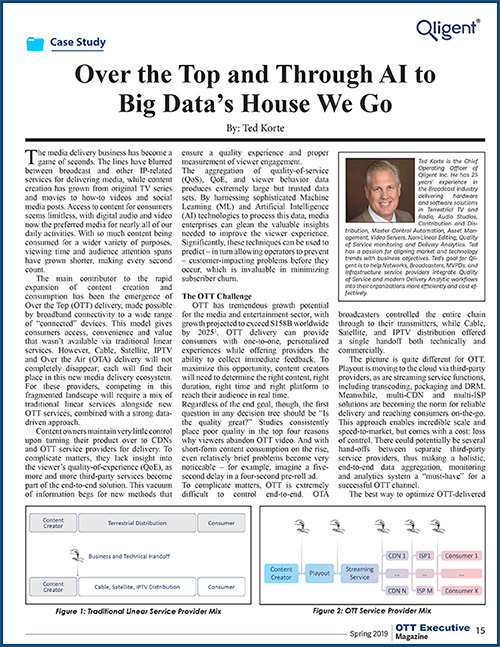

The media delivery business has become a game of seconds. The lines have blurred between broadcast and other IP-related services for delivering media, while content creation has grown from original TV series and movies to how-to videos and social media posts. Access to content for consumers seems limitless, with digital audio and video now the preferred media for nearly all of our daily activities. With so much content being consumed for a wider variety of purposes, viewing time and audience attention spans have grown shorter, making every second count.

Content owners maintain very little control upon turning their product over to CDNs and OTT service providers for delivery. To complicate matters, they lack insight into the viewer’s quality-of-experience (QoE), as more and more third-party services become part of the end-to-end solution. This vacuum of information begs for new methods that ensure a quality experience and proper measurement of viewer engagement.

The best way to optimize OTT-delivered content is to start with high-quality delivery to a target audience and respond to feedback in real time. To achieve this, many are looking toward new technologies – most notably, AI.

Content owners maintain very little control upon turning their product over to CDNs and OTT service providers. To complicate matters, they lack insight into the viewer’s quality-of-experience (QoE) as more and more third-party services become part of the end-to-end solution. A common question is, “What is actually coming out the other end?” This vacuum of information begs for new methods that ensure a quality product and proper measurement of viewer engagement. The aggregation of QoS, QoE, and viewer behavior data produces extremely large but trusted data sets. Processing this data with sophisticated machine learning (ML) and artificial intelligence (AI) technologies is proving to be very effective in extracting maximum value from the content – and advertising. This paper will explore emerging technologies and best practices for harnessing the power of cloud computing, AI, and massive datasets to improve the viewer experience and maximize revenue from every viewing platform.

If great content is all it takes to be successful, why are so many end users still “cutting the cord”? Having great content is only part of the competitive battle for providers these days, as price, accessibility, relevance, personalization, quality, choice, and more have all become critical factors in keeping viewers engaged. This paper by Ted Korte discusses the importance of quality in a new, highly competitive interactive television market and shares experience from implementing a BigData SQM platform for a large quad-play telco operator.

While always welcome, new technology has caused a great deal of disruption to the media and entertainment industry in recent years. Viewers have more options than ever for what, when, where, and how to consume content; vendors are virtualizing functions from production to playout to archive; new infrastructures such as Content Delivery Networks (CDN) and Software Defined Networks (SDN) are evolving; non-traditional players are making huge inroads into the media and entertainment market; and anyone can reach a global audience these days. Professional content and professional quality still separate the winners from the rest of the pack. This paper presents how early planning and adoption of virtualized functions, cloud-based workflows, and data analytics will lead toward a big data ecosystem. It focuses on one of the more recent but more difficult workflows to virtualize: Quality of Service (QoS) and Quality of Experience (QoE) monitoring, and end-to-end delivery verification.